Lagrange multipliers

subject to a constraint (shown in red)

subject to a constraint (shown in red)  .

.

. The blue lines are contours of

. The blue lines are contours of  . The point where the red line tangentially touches a blue contour is our solution.

. The point where the red line tangentially touches a blue contour is our solution.In mathematical optimization, the method of Lagrange multipliers (named after Joseph Louis Lagrange) provides a strategy for finding the maxima and minima of a function subject to constraints.

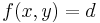

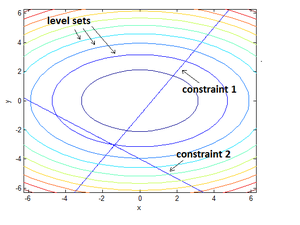

For instance (see Figure 1 on the right), consider the optimization problem

- maximize

- subject to

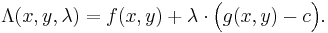

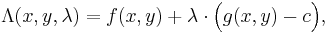

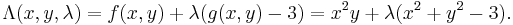

We introduce a new variable ( ) called a Lagrange multiplier, and study the Lagrange function defined by

) called a Lagrange multiplier, and study the Lagrange function defined by

(the  term may be either added or subtracted.) If

term may be either added or subtracted.) If  is a maximum for the original constrained problem, then there exists a λ such that

is a maximum for the original constrained problem, then there exists a λ such that  is a stationary point for the Lagrange function (stationary points are those points where the partial derivatives of Λ are zero). However, not all stationary points yield a solution of the original problem. Thus, the method of Lagrange multipliers yields a necessary condition for optimality in constrained problems.[1]

is a stationary point for the Lagrange function (stationary points are those points where the partial derivatives of Λ are zero). However, not all stationary points yield a solution of the original problem. Thus, the method of Lagrange multipliers yields a necessary condition for optimality in constrained problems.[1]

Contents |

Introduction

Consider the two-dimensional problem introduced above:

- maximize

- subject to

We can visualize contours of f given by

for various values of  , and the contour of

, and the contour of  given by

given by  .

.

Suppose we walk along the contour line with  . In general the contour lines of

. In general the contour lines of  and

and  may be distinct, so following the contour line for

may be distinct, so following the contour line for  one could intersect with or cross the contour lines of

one could intersect with or cross the contour lines of  . This is equivalent to saying that while moving along the contour line for

. This is equivalent to saying that while moving along the contour line for  the value of

the value of  can vary. Only when the contour line for

can vary. Only when the contour line for  meets contour lines of

meets contour lines of  tangentially, we do not increase or decrease the value of

tangentially, we do not increase or decrease the value of  —that is, when the contour lines touch but do not cross.

—that is, when the contour lines touch but do not cross.

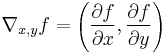

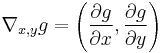

The contour lines of f and g touch when the tangent vectors of the contour lines are parallel. Since the gradient of a function is perpendicular to the contour lines, this is the same as saying that the gradients of f and g are parallel. Thus we want points  where

where  and

and

,

,

where

and

are the respective gradients. The constant  is required because although the two gradient vectors are parallel, the magnitudes of the gradient vectors are generally not equal.

is required because although the two gradient vectors are parallel, the magnitudes of the gradient vectors are generally not equal.

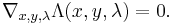

To incorporate these conditions into one equation, we introduce an auxiliary function

and solve

This is the method of Lagrange multipliers. Note that  implies

implies  .

.

Not extrema

The solutions are the critical points of the Lagrangian  ; they are not necessarily extrema of

; they are not necessarily extrema of  . In fact, the function

. In fact, the function  is unbounded: given a point

is unbounded: given a point  that does not lie on the constraint, letting

that does not lie on the constraint, letting  makes

makes  arbitrarily large or small.

arbitrarily large or small.

One may reformulate the Lagrangian as a Hamiltonian, in which case the solutions are local minima for the Hamiltonian. This is done in optimal control theory, in the form of Pontryagin's minimum principle.

The fact that solutions of the Lagrangian are not extrema also poses difficulties for numerical optimization. This can be addressed by computing the magnitude of the gradient, as the zeros of the magnitude are necessarily local minima, and is illustrated in the numerical optimization example.

Handling Multiple Constraints

The method of Lagrange multipliers can also accommodate multiple constraints. To see how this is done, we need to reexamine the problem in a slightly different manner because the concept of “crossing” discussed above becomes rapidly unclear when we consider the types of constraints that are created when we have more than one constraint acting together.

As an example, consider a paraboloid with a constraint that is a single point (as might be created if we had 2 line constraints that intersect). The level set (i.e. contour line) clearly appears to “cross” that point and its gradient is clearly not parallel to the gradients of either of the two line constraints. Yet, it is obviously a maximum *and* a minimum because there is only one point on the paraboloid that meets the constraint.

While this example seems a bit odd, it is easy to understand and is representative of the sort of “effective” constraint that appears quite often when we deal with multiple constraints intersecting. Thus, we take a slightly different approach below to explain and derive the Lagrange Multipliers method with any number of constraints.

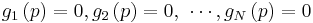

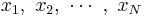

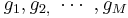

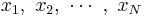

Throughout this section, the independent variables will be denoted by  and, as a group, we will denote them as

and, as a group, we will denote them as  . Also, the function being analyzed will be denoted by

. Also, the function being analyzed will be denoted by  and the constraints will be represented by the equations

and the constraints will be represented by the equations  .

.

The basic idea remains essentially the same: if we consider only the points that satisfy the constraints (i.e. are in the constraints), then a point  is a stationary point (i.e. a point in a “flat” region) of f if and only if the constraints at that point do not allow movement in a direction where f changes value. It is intuitive that this is true because if the constraints allowed us to travel from this point to a (infinitesimally) near point with a different value, then we would not be in a “flat” region (i.e. a stationary point).

is a stationary point (i.e. a point in a “flat” region) of f if and only if the constraints at that point do not allow movement in a direction where f changes value. It is intuitive that this is true because if the constraints allowed us to travel from this point to a (infinitesimally) near point with a different value, then we would not be in a “flat” region (i.e. a stationary point).

Once we have located the stationary points, we need to do further tests to see if we have found a minimum, a maximum or just a stationary point that is neither.

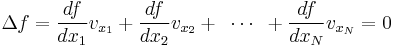

We start by considering the level set of f at  . The set of vectors

. The set of vectors  containing the directions in which we can move and still remain in the same level set are the directions where the value of f does not change (i.e. the change equals zero). Thus, for every vector v in

containing the directions in which we can move and still remain in the same level set are the directions where the value of f does not change (i.e. the change equals zero). Thus, for every vector v in  , the following relation must hold:

, the following relation must hold:

Where the notation  above means the

above means the  -component of the vector v. The equation above can be rewritten in a more compact geometric form that helps our intuition:

-component of the vector v. The equation above can be rewritten in a more compact geometric form that helps our intuition:

![\begin{matrix}

\underbrace{\begin{matrix}

\left[ \begin{matrix}

\frac{df}{dx_{1}} \\

\frac{df}{dx_{2}} \\

\vdots \\

\frac{df}{dx_{N}} \\

\end{matrix} \right] \\

{} \\

\end{matrix}}_{\nabla f} & \centerdot & \underbrace{\begin{matrix}

\left[ \begin{matrix}

v_{x_{1}} \\

v_{x_{2}} \\

\vdots \\

v_{x_{N}} \\

\end{matrix} \right] \\

{} \\

\end{matrix}}_{v} & =\,\,0 \\

\end{matrix}\,\,\,\,\,\,\,\,\,\,\,\,\Rightarrow \,\,\,\,\,\,\,\,\nabla f\,\,\,\centerdot \,\,\,\,v\,\,=\,\,\,0](/2010-wikipedia_en_wp1-0.8_orig_2010-12/I/3625b2c39c79c71f024444235ca2ea18.png)

This makes it clear that if we are at p , then all directions from this point that do not change the value of f must be perpendicular to  (the gradient of f at p).

(the gradient of f at p).

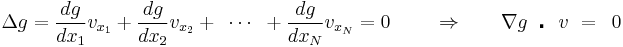

Now let us consider the effect of the constraints. Each constraint limits the directions that we can move from a particular point and still satisfy the constraint. We can use the same procedure, to look for the set of vectors  containing the directions in which we can move and still satisfy the constraint. As above, for every vector v in

containing the directions in which we can move and still satisfy the constraint. As above, for every vector v in  , the following relation must hold:

, the following relation must hold:

From this, we see that at point p, all directions from this point that will still satisfy this constraint must be perpendicular to  .

.

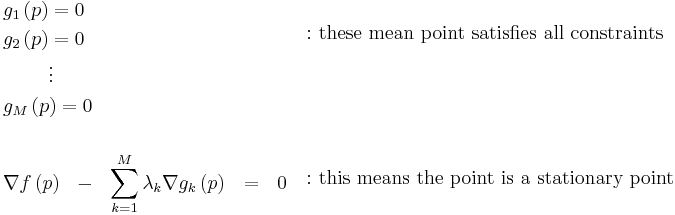

Now we are ready to refine our idea further and complete the method: a point on f is a constrained stationary point if and only if the direction that changes f violates at least one of the constraints. (We can see that this is true because if a direction that changes f did not violate any constraints, then there would a “legal” point nearby with a higher or lower value for f and the current point would then not be a stationary point.)

Single Constraint Revisited

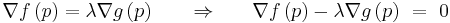

For a single constraint, we use the statement above to say that at stationary points the direction that changes f is in the same direction that violates the constraint. To determine if two vectors are in the same direction, we note that if two vectors start from the same point and are “in the same direction”, then one vector can always “reach” the other by changing its length and/or flipping to point the opposite way along the same direction line. In this way, we can succinctly state that two vectors point in the same direction if and only if one of them can be multiplied by some real number such that they become equal to the other. So, for our purposes, we require that:

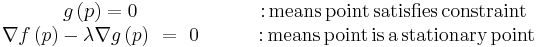

If we now add another simultaneous equation to guarantee that we only perform this test when we are at a point that satisfies the constraint, we end up with 2 simultaneous equations that when solved, identify all constrained stationary points:

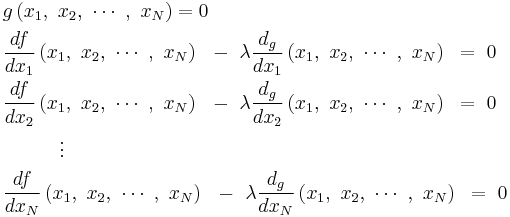

Note that the above is a succinct way of writing the equations. Fully expanded, there are  simultaneous equations that need to be solved for the

simultaneous equations that need to be solved for the  variables

variables  and

and  :

:

Multiple Constraints

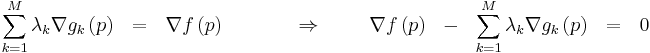

For more than one constraint, the same reasoning applies. If there is more than one constraint active together, each constraint contributes a direction that will violate it. Together, these “violation directions” form a “violation space”, where infinitesimal movement in any direction within the space will violate one or more constraints. Thus, to satisfy multiple constraints we can state (using this new terminology) that at the stationary points, the direction that changes f is in the “violation space” created by the constraints acting jointly.

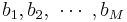

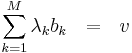

The “violation space” created by the constraints consists of all points that can be reached by adding any combination of scaled and/or flipped versions of the individual violation direction vectors. In other words, all the points that are “reachable” when we use the individual violation directions as the basis of the space. Thus, we can succinctly state that v is in the space defined by  if and only if there exists a set of “multipliers”

if and only if there exists a set of “multipliers”  such that:

such that:

Which for our purposes, translates to stating that the direction that changes f at p is in the “violation space” defined by the constraints  if and only if:

if and only if:

As before, we now add simultaneous equation to guarantee that we only perform this test when we are at a point that satisfies every constraint, we end up with simultaneous equations that when solved, identify all constrained stationary points:

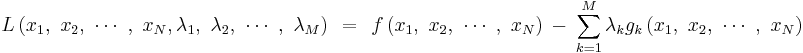

The method is complete now (from the standpoint of solving the problem of finding stationary points) but as mathematicians delight in doing, these equations can be further condensed into an even more elegant and succinct form. Lagrange must have cleverly noticed that the equations above look like partial derivatives of some larger scalar function L that takes all the  and all the

and all the  as inputs. Next, he might then have noticed that setting every equation equal to zero is exactly what one would have to do to solve for the unconstrained stationary points of that larger function. Finally, he showed that a larger function L with partial derivatives that are exactly the ones we require can be constructed very simply as below:

as inputs. Next, he might then have noticed that setting every equation equal to zero is exactly what one would have to do to solve for the unconstrained stationary points of that larger function. Finally, he showed that a larger function L with partial derivatives that are exactly the ones we require can be constructed very simply as below:

Solving the equation above for its unconstrained stationary points generates exactly the same stationary points as solving for the constrained stationary points of f under the constraints  .

.

In Lagrange’s honor, the function above is called a Lagrangian, the scalars  are called Lagrange Multipliers and this optimization method itself is called The Method of Lagrange Multipliers.

are called Lagrange Multipliers and this optimization method itself is called The Method of Lagrange Multipliers.

The method of Lagrange multipliers is generalized by the Karush–Kuhn–Tucker conditions, which can also take into account inequality constraints of the form h(x) ≤ c.

Interpretation of the Lagrange multipliers

Often the Lagrange multipliers have an interpretation as some quantity of interest. To see why this might be the case, observe that:

So, λk is the rate of change of the quantity being optimized as a function of the constraint variable. As examples, in Lagrangian mechanics the equations of motion are derived by finding stationary points of the action, the time integral of the difference between kinetic and potential energy. Thus, the force on a particle due to a scalar potential,  , can be interpreted as a Lagrange multiplier determining the change in action (transfer of potential to kinetic energy) following a variation in the particle's constrained trajectory. In economics, the optimal profit to a player is calculated subject to a constrained space of actions, where a Lagrange multiplier is the increase in the value of the objective function due to the relaxation of a given constraint (e.g. through an increase in income or bribery or other means) – the marginal cost of a constraint, called the shadow price.

, can be interpreted as a Lagrange multiplier determining the change in action (transfer of potential to kinetic energy) following a variation in the particle's constrained trajectory. In economics, the optimal profit to a player is calculated subject to a constrained space of actions, where a Lagrange multiplier is the increase in the value of the objective function due to the relaxation of a given constraint (e.g. through an increase in income or bribery or other means) – the marginal cost of a constraint, called the shadow price.

In control theory this is formulated instead as costate equations.

Examples

Very simple example

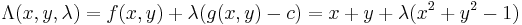

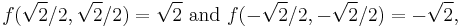

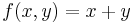

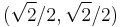

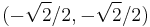

Suppose you wish to maximize  subject to the constraint

subject to the constraint  . The constraint is the unit circle, and the level sets of f are diagonal lines (with slope -1), so one can see graphically that the maximum occurs at

. The constraint is the unit circle, and the level sets of f are diagonal lines (with slope -1), so one can see graphically that the maximum occurs at  (and the minimum occurs at

(and the minimum occurs at  )

)

Formally, set  , and

, and

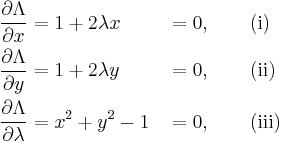

Set the derivative  , which yields the system of equations:

, which yields the system of equations:

As always, the  equation ((iii) here) is the original constraint.

equation ((iii) here) is the original constraint.

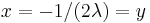

Combining the first two equations yields  (explicitly,

(explicitly,  , otherwise (i) yields 1 = 0, so one has

, otherwise (i) yields 1 = 0, so one has  ).

).

Substituting into (iii) yields  , so

, so  and the stationary points are

and the stationary points are  and

and  . Evaluating the objective function f on these yields

. Evaluating the objective function f on these yields

thus the maximum is  , which is attained at

, which is attained at  and the minimum is

and the minimum is  , which is attained at

, which is attained at  .

.

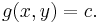

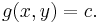

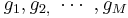

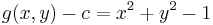

Simple example

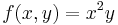

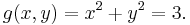

Suppose you want to find the maximum values for

with the condition that the x and y coordinates lie on the circle around the origin with radius √3, that is,

As there is just a single condition, we will use only one multiplier, say λ.

The constraint g(x, y)-c is identically zero on the circle of radius √3. So any multiple of g(x, y) may be added to f(x, y) leaving f(x, y) unchanged in the region of interest (above the circle where our original constraint is satisfied). Let

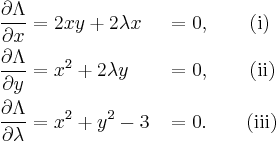

The critical values of  occur when its gradient is zero. The partial derivatives are

occur when its gradient is zero. The partial derivatives are

Equation (iii) is just the original constraint. Equation (i) implies  or λ = −y. In the first case, if x = 0 then we must have

or λ = −y. In the first case, if x = 0 then we must have  by (iii) and then by (ii) λ = 0. In the second case, if λ = −y and substituting into equation (ii) we have that,

by (iii) and then by (ii) λ = 0. In the second case, if λ = −y and substituting into equation (ii) we have that,

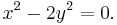

Then x2 = 2y2. Substituting into equation (iii) and solving for y gives this value of y:

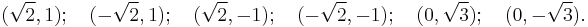

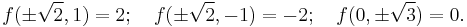

Thus there are six critical points:

Evaluating the objective at these points, we find

Therefore, the objective function attains a global maximum (with respect to the constraints) at  and a global minimum at

and a global minimum at  The point

The point  is a local minimum and

is a local minimum and  is a local maximum, as may be determined by consideration of the Hessian matrix of

is a local maximum, as may be determined by consideration of the Hessian matrix of  .

.

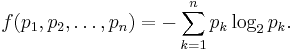

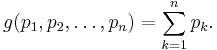

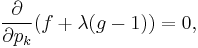

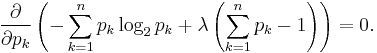

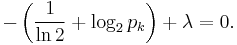

Example: entropy

Suppose we wish to find the finite probability distribution (Without loss of generality, say on the points  ) with maximal information entropy. Then

) with maximal information entropy. Then

Of course, the sum of these probabilities equals 1, so our constraint is g(p) = 1 with

We can use Lagrange multipliers to find the point of maximum entropy (depending on the probabilities). For all k from 1 to n, we require that

which gives

Carrying out the differentiation of these n equations, we get

This shows that all pk are equal (because they depend on λ only). By using the constraint ∑k pk = 1, we find

Hence, the uniform distribution is the distribution with the greatest entropy, among distributions on n points.

Example: numerical optimization

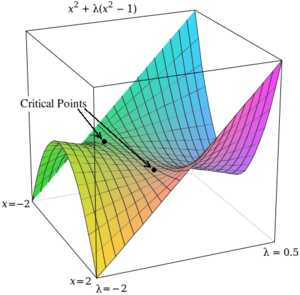

With Lagrange multipliers, the critical points occur at saddle points, rather than at local maxima (or minima). Unfortunately, many numerical optimization techniques, such as hill climbing, gradient descent, some of the quasi-Newton methods, among others, are designed to find local maxima (or minima) and not saddle points. For this reason, one must either modify the formulation to ensure that it's a minimization problem (for example, by extremizing the square of the gradient of the Lagrangian as below), or else use an optimization technique that finds stationary points (such as Newton's method without an extremum seeking line search) and not necessarily extrema.

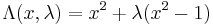

As a simple example, consider the problem of finding the value of x that minimizes  , constrained such that

, constrained such that  . (This problem is somewhat pathological because there are only two values that satisfy this constraint, but it is useful for illustration purposes because the corresponding unconstrained function can be visualized in three dimensions.)

. (This problem is somewhat pathological because there are only two values that satisfy this constraint, but it is useful for illustration purposes because the corresponding unconstrained function can be visualized in three dimensions.)

Using Lagrange multipliers, this problem can be converted into an unconstrained optimization problem:

The two critical points occur at saddle points where  and

and  .

.

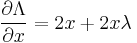

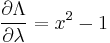

In order to solve this problem with a numerical optimization technique, we must first transform this problem such that the critical points occur at local minima. This is done by computing the magnitude of the gradient of the unconstrained optimization problem.

First, we compute the partial derivative of the unconstrained problem with respect to each variable:

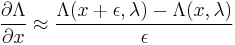

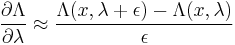

If the target function is not differentiable, the differential with respect to each variable can be measured empirically:

where  is a small value.

is a small value.

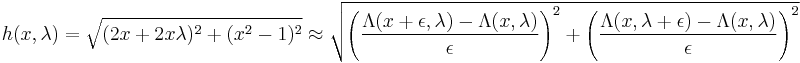

Next, we compute the magnitude of the gradient, which is the square root of the sum of the squares of the partial derivatives:

Alternatively, one may use the magnitude squared, which is the sum of the squares of the partials, without taking a square root – this has the advantage of being smooth if the partials are, while the square root may not be differentiable at the zeros.

The critical points of h occur at  and

and  , just as in

, just as in  . Unlike the critical points in

. Unlike the critical points in  , however, the critical points in h occur at local minima, so numerical optimization techniques can be used to find them.

, however, the critical points in h occur at local minima, so numerical optimization techniques can be used to find them.

Applications

Economics

Constrained optimization plays a central role in economics. For example, the choice problem for a consumer is represented as one of maximizing a utility function subject to a budget constraint. The Lagrange multiplier has an economic interpretation as the shadow price associated with the constraint, in this example the marginal utility of income.

Control theory

In optimal control theory, the Lagrange multipliers are interpreted as costate variables, and Lagrange multipliers are reformulated as the minimization of the Hamiltonian, in Pontryagin's minimum principle.

The strong Lagrangian principle: Lagrange duality

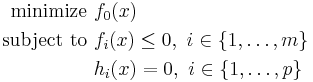

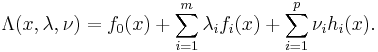

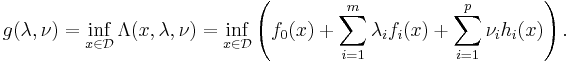

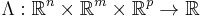

Given a convex optimization problem in standard form

with the domain  having non-empty interior, the Lagrangian function

having non-empty interior, the Lagrangian function  is defined as

is defined as

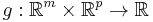

The vectors  and

and  are called the dual variables or Lagrange multiplier vectors associated with the problem. The Lagrange dual function

are called the dual variables or Lagrange multiplier vectors associated with the problem. The Lagrange dual function  is defined as

is defined as

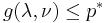

The dual function g is concave, even when the initial problem is not convex. The dual function yields lower bounds on the optimal value  of the initial problem; for any

of the initial problem; for any  and any

and any  we have

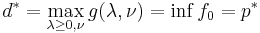

we have  . If a constraint qualification such as Slater's condition holds and the original problem is convex, then we have strong duality, i.e.

. If a constraint qualification such as Slater's condition holds and the original problem is convex, then we have strong duality, i.e.  .

.

See also

- Karush–Kuhn–Tucker conditions: generalization of the method of Lagrange multipliers.

- Lagrange multipliers on Banach spaces: another generalization of the method of Lagrange multipliers.

References

- ↑ Vapnyarskii, I.B. (2001), "Lagrange multipliers", in Hazewinkel, Michiel, Encyclopaedia of Mathematics, Springer, ISBN 978-1556080104, http://eom.springer.de/L/l057190.htm.

External links

Exposition

- Conceptual introduction (plus a brief discussion of Lagrange multipliers in the calculus of variations as used in physics)

For additional text and interactive applets

- Simple explanation with an example of governments using taxes as Lagrange multipliers

- Applet

- Tutorial and applet

- Video Lecture of Lagrange Multipliers

- Slides accompanying Bertsekas's nonlinear optimization text, with details on Lagrange multipliers (lectures 11 and 12)

- http://eom.springer.de/L/l057190.htm